Goals:

As mentioned earlier, the flow subsystem is the integral part of ONOS that translates Intents into flow rules that can be installed on OpenFlow switches. Applications can also call its API directly to inject flow rules. It is therefore on the critical performance path when applications use the Northbound API and the intent framework. This experiment provides an additional performance breakdown of the end-to-end Intent performance, and shows what applications can expect when interfacing directly with the flow rule system.

Setup and Method:

To generate a batch of flow rules to be installed and removed by ONOS, we use the "flow-tester.py" utility, which is part of the ONOS tools (under $ONOS_ROOT/tools/test/bin). When executed, the tool causes ONOS to install a set of flow rules on the devices under its control, and returns a response time once all flow rules have been installed successfully. The tool accepts a number of parameters that vary how the test is run (see the command's help page for more details):

- number of flow rules per switch;

- number of Neighbors - the number of ONOS nodes (other than the local ONOS node running the tool) to which the local node must send flow rules because the mastership of the target switches is not local;

- number of Servers - the number of ONOS nodes running this tool, i.e. generating the flow rules.

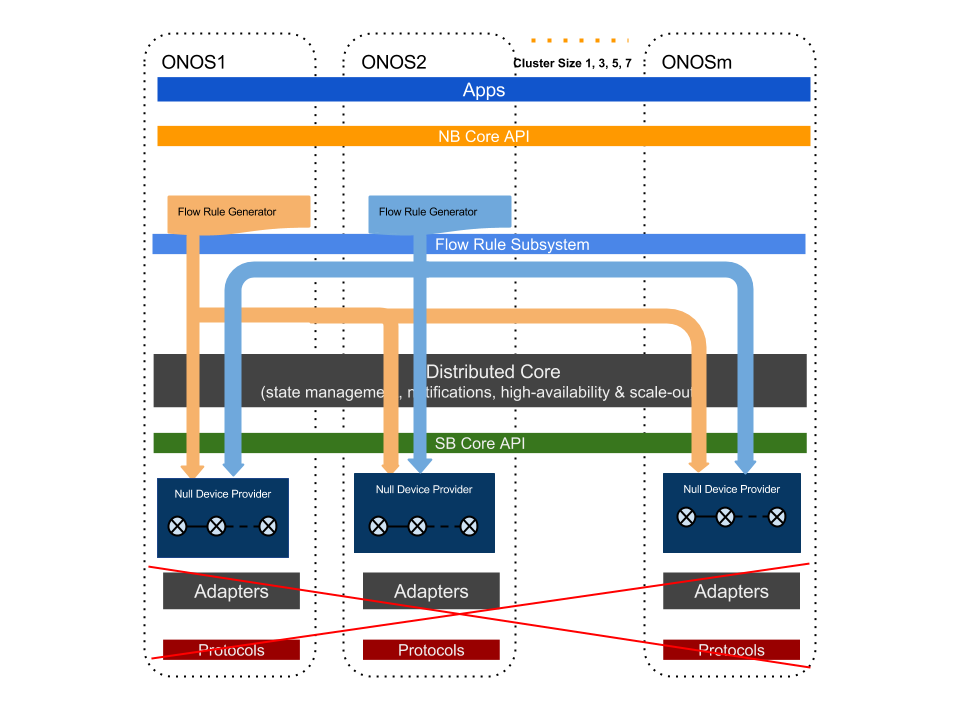

The diagram above depicts the general setup. In this example, ONOS1 and ONOS2 are the two servers running the tool to generate flows, each with two Neighbors; that is, the generated flow rules are passed to two neighboring nodes for installation, because the flows belong to switches whose mastership is held by those nodes.

We enable Null Providers as the consumers of the flow rules, bypassing the OpenFlow adapters and the potential performance limitations of real or emulated switches.

For the experiment run for this release, we use the following parameters:

- The total number of Null Devices is constant at 35. Their masterships are assigned "equally" to all nodes in the cluster, e.g. in a 5-node cluster, each node holds mastership of seven devices;

- The total number of flow rules to install across the cluster is 122,500. This number is chosen to be large enough and to split easily across the cluster sizes under test. From it we calculate the number of flows to install on each switch, which is passed as an argument to the "flow-tester.py" tool.

- We test the two most relevant scenarios: 1) the number of Neighbors is zero, in which case all flow rules are installed locally on the generating node; 2) the number of Neighbors is (cluster size - 1), i.e. each generating node generates flow rules for itself and for all other nodes in the cluster.

- We run the experiment with cluster sizes of 1, 3, 5 and 7.

- The response time is aggregated over 20 measured runs, after 4 warm-up runs.
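The parameters above can be sketched as a small test matrix. This is an illustration only: the function names are hypothetical, and the per-switch figure assumes the cluster-wide total is divided evenly over all 35 devices. The exact value passed to "flow-tester.py" may be derived differently, depending on how the tool combines it with the Neighbors and Servers settings.

```python
TOTAL_DEVICES = 35        # Null Devices, constant across all runs
TOTAL_FLOWS = 122_500     # flow rules installed cluster-wide per run
CLUSTER_SIZES = (1, 3, 5, 7)


def devices_per_node(cluster_size, total_devices=TOTAL_DEVICES):
    """Spread device masterships as evenly as possible across the nodes."""
    base, extra = divmod(total_devices, cluster_size)
    # The first `extra` nodes take one additional device.
    return [base + 1 if i < extra else base for i in range(cluster_size)]


def flows_per_switch(total_flows=TOTAL_FLOWS, total_devices=TOTAL_DEVICES):
    """Assumption: the cluster-wide total is divided evenly over all devices."""
    return total_flows // total_devices


def test_matrix():
    """List one configuration per (cluster size, Neighbors scenario)."""
    rows = []
    for size in CLUSTER_SIZES:
        # Scenario 1: N = 0; scenario 2: N = cluster size - 1 ("all").
        # For a single node both scenarios coincide, so only one row is kept.
        for neighbors in sorted({0, size - 1}):
            rows.append({
                "cluster_size": size,
                "neighbors": neighbors,
                "devices_per_node": devices_per_node(size),
                "flows_per_switch": flows_per_switch(),
            })
    return rows


if __name__ == "__main__":
    for row in test_matrix():
        print(row)
```

With 35 devices, the 5-node and 7-node clusters split evenly (7 and 5 devices per node), while the 3-node cluster can only be approximately equal (12, 12 and 11), which is consistent with "equally" being quoted above.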

Note: At the time of release, the ONOS core still uses Hazelcast as the store protocol for backing up flow rules. Experiments showed that this backup can cause high variation in the flow rule burst install rate. For this set of experiments, we therefore turned flow rule backup off in the released code (under "$ONOS_ROOT/core/store/dist/src/main/java/org/onosproject/store/flow/impl/DistributedFlowRuleStore.java").

Results:

Analysis and Conclusions:

A few system performance conclusions can be drawn:

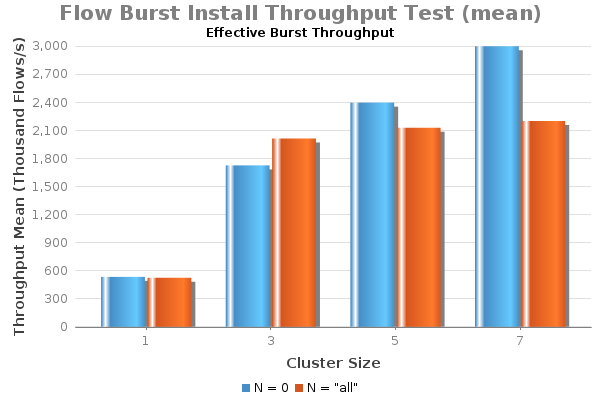

- The data shows higher overall system throughput with N=0 than with N="all". One can conclude that the flow subsystem performs better when generated flow rules stay on the local node and are installed on devices mastered by that node. Because east-west (EW) communication between nodes adds overhead and can become a bottleneck, performance with N="all" is lower, as expected.

- In both cases, overall performance scales with the number of nodes in the cluster, a clear scale-out effect. The effect is non-linear, however: the performance gain levels off as nodes are added, and the leveling off is more pronounced when neighboring communication is required, i.e. with N="all", than with N=0.

- The performance numbers obtained with N=0 and N="all" are similar, which indicates that east-west communication does not limit ONOS intent operation performance.
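The comparisons above rest on throughput figures derived from the measured response times. A minimal sketch of that derivation follows, under several assumptions: response times are reported in milliseconds, the 20 measured runs are combined as a plain mean, and throughput is the total number of flow rules divided by the mean response time. All function names are hypothetical and are not part of the ONOS tools.

```python
from statistics import mean, stdev

WARMUP_RUNS = 4      # discarded before measuring
MEASURED_RUNS = 20   # samples kept for the statistics


def summarize(response_times_ms, warmup=WARMUP_RUNS, measured=MEASURED_RUNS):
    """Drop warm-up samples, then return (mean, sample std dev) in ms."""
    samples = response_times_ms[warmup:warmup + measured]
    if len(samples) < 2:
        raise ValueError("need at least two measured samples")
    return mean(samples), stdev(samples)


def throughput(total_flows, mean_response_ms):
    """Flow rules installed per second for one burst (assumes ms input)."""
    return total_flows / (mean_response_ms / 1000.0)


def scaling_efficiency(throughput_n, throughput_1, cluster_size):
    """Ratio to ideal linear scale-out; 1.0 means perfectly linear."""
    return throughput_n / (throughput_1 * cluster_size)
```

A scaling efficiency that falls below 1.0 as the cluster grows corresponds to the non-linear scale-out described above; comparing the value for N=0 against N="all" at the same cluster size shows how much of the shortfall coincides with east-west traffic.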