Introduction

Note that these instructions assume you're familiar with ONOS and OpenStack. They do not cover how to install or troubleshoot these services. If you aren't familiar with them, please refer to the ONOS (http://wiki.onosproject.org) and OpenStack (http://docs.openstack.org) documentation, respectively.

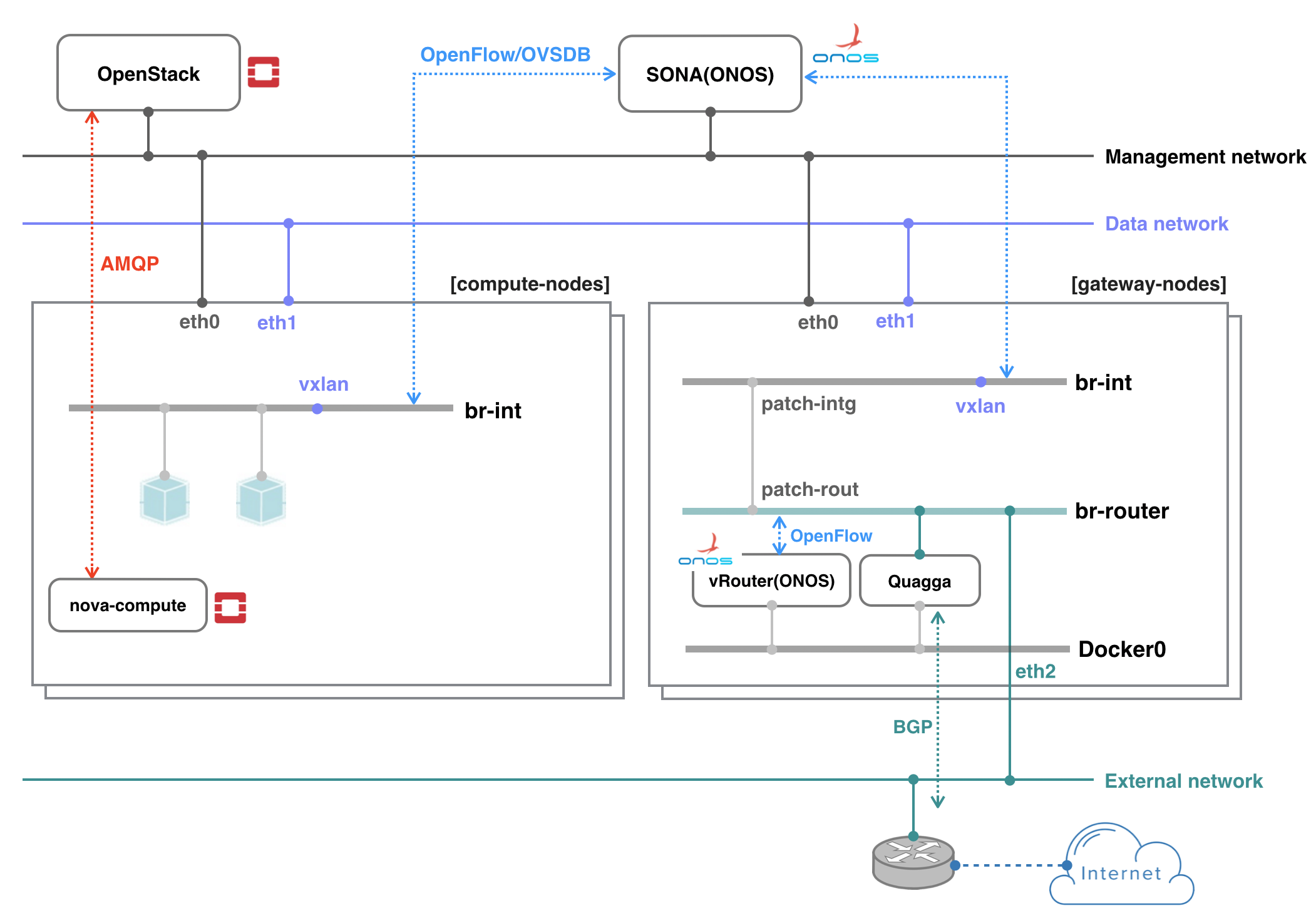

The example deployment depicted in the above figure uses three networks with an external router.

- Management network: used for ONOS to control virtual switches, and OpenStack to communicate with nova-compute agent running on the compute node

- Data network: used for East-West traffic via VXLAN tunnel

- External network: used for North-South traffic; normally only gateway nodes have access to this network

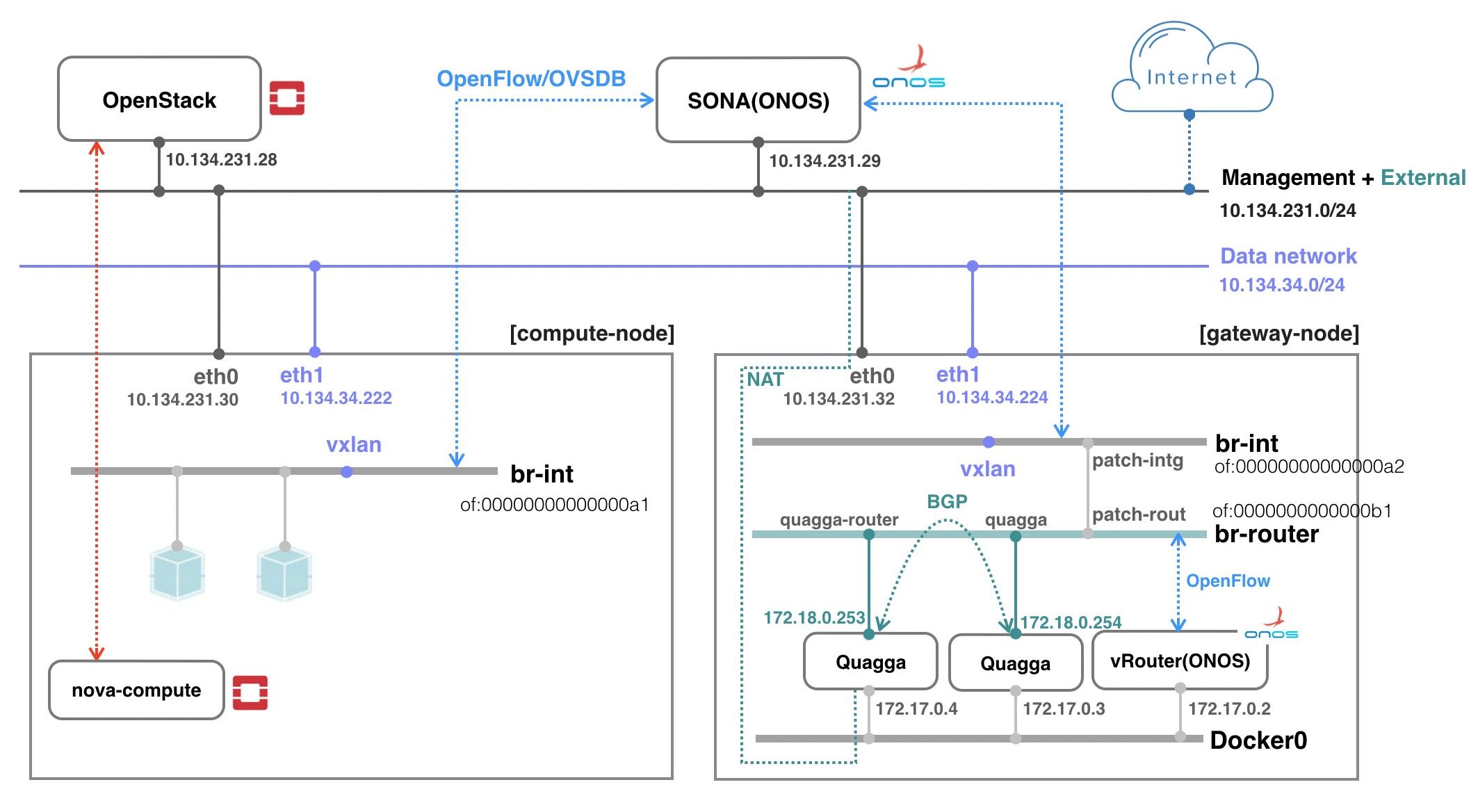

All networks can share a network interface in case your test machine does not have enough interfaces. You can also emulate external router. The figure below shows an example test environment used in the rest of this guide with emulated external router and two network interfaces, one for sharing management and external, and the other for data.

Prerequisite

1. Install OVS on all nodes, including compute and gateway nodes. Make sure your OVS version is 2.3.0 or later (later than 2.5.0 is recommended). Refer to this guide for updating OVS (don't forget to change the version in the guide).

2. Set OVSDB to listening mode. Note that "compute_node_ip" in the command below should be an address accessible from the ONOS instance.

$ ovs-appctl -t ovsdb-server ovsdb-server/add-remote ptcp:6640:[compute_node_ip]

If you want to make the setting permanent, add the following line to /usr/share/openvswitch/scripts/ovs-ctl, right after the 'set ovsdb-server "$DB_FILE"' line. You'll need to restart the openvswitch-switch service after that.

set "$@" --remote=ptcp:6640

Now you should see that port 6640 is in the listening state.

$ netstat -ntl
Active Internet connections (only servers)
Proto Recv-Q Send-Q Local Address           Foreign Address         State
tcp        0      0 0.0.0.0:22              0.0.0.0:*               LISTEN
tcp        0      0 0.0.0.0:6640            0.0.0.0:*               LISTEN
tcp6       0      0 :::22
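Because the OVSDB port has to be reachable from the ONOS instance, it can help to test the connection from the ONOS host as well as locally. The following is a minimal sketch, not part of SONA; the function name is hypothetical, and it only checks that a TCP connection can be opened.

```python
import socket


def is_port_listening(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection raises OSError (including timeouts) on failure.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

For example, running is_port_listening("10.1.1.162", 6640) on the ONOS host would test the OVSDB port of a compute node, assuming 10.1.1.162 is that node's management address.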

3. Check your OVS state. It is recommended to clean up all stale bridges used by OpenStack, including br-int, br-tun, and br-ex, if there are any. Note that SONA will add the required bridges via OVSDB once it is up.

$ sudo ovs-vsctl show

cedbbc0a-f9a4-4d30-a3ff-ef9afa813efb

ovs_version: "2.5.0"
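To spot stale bridges quickly, the output of ovs-vsctl show can be scanned for the bridge names mentioned above. This is a small sketch under the assumption that bridges appear as lines of the form Bridge NAME (optionally quoted); the function name is hypothetical.

```python
import re

# Bridge names that step 3 recommends cleaning up before SONA starts.
STALE_BRIDGES = ("br-int", "br-tun", "br-ex")


def find_stale_bridges(ovs_show_output, names=STALE_BRIDGES):
    """Return the stale bridge names found in 'ovs-vsctl show' output."""
    found = []
    for line in ovs_show_output.splitlines():
        # Bridge lines look like: Bridge br-int   or   Bridge "br-ex"
        match = re.match(r'\s*Bridge\s+"?([^"\s]+)"?\s*$', line)
        if match and match.group(1) in names:
            found.append(match.group(1))
    return found
```

Each bridge reported this way can then be removed with sudo ovs-vsctl del-br followed by the bridge name. An empty result corresponds to the clean state shown above.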

OpenStack Setup

How to deploy OpenStack is out of the scope of this documentation. This section only describes the configuration needed to use SONA. All other settings are completely up to your environment.

Controller Node

1. Install networking-onos (Neutron ML2 plugin for ONOS) first.

$ git clone https://github.com/openstack/networking-onos.git
$ pip install ./networking-onos

2. Edit ml2_conf_onos.ini under networking-onos/etc/neutron/plugins/ml2 to set the ONOS endpoint and credentials. You may want to copy the config file to /etc/neutron/plugins/ml2/, where the other Neutron configuration files are.

# Configuration options for ONOS ML2 Mechanism driver
[onos]
# (StrOpt) ONOS ReST interface URL. This is a mandatory field.
url_path = http://10.134.231.29:8181/onos/openstacknetworking
# (StrOpt) Username for authentication. This is a mandatory field.
username = onos
# (StrOpt) Password for authentication. This is a mandatory field.
password = rocks

The URL path changed from "onos/openstackswitching" to "onos/openstacknetworking" in 1.8.0.
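A quick sanity check of the file can catch a missing mandatory field or a leftover pre-1.8.0 URL path before Neutron is started. The sketch below uses Python's standard configparser; the function name and the message strings are hypothetical, and it assumes ONOS 1.8.0 or later.

```python
import configparser
from urllib.parse import urlparse

# Fields marked as mandatory in ml2_conf_onos.ini.
MANDATORY = ("url_path", "username", "password")


def check_onos_ml2_conf(text):
    """Return a list of problems found in ml2_conf_onos.ini content."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(text)
    if not parser.has_section("onos"):
        return ["missing [onos] section"]
    problems = []
    for key in MANDATORY:
        if not parser.get("onos", key, fallback="").strip():
            problems.append("missing mandatory field: " + key)
    url = parser.get("onos", "url_path", fallback="")
    path = urlparse(url).path.rstrip("/")
    # Assumption: ONOS 1.8.0 or later, so the new path is expected.
    if url and path != "/onos/openstacknetworking":
        problems.append("unexpected URL path: " + path)
    return problems
```

An empty list means the three mandatory fields are present and the URL path matches the one used since 1.8.0.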

3. The next step is to install and run the OpenStack services. DevStack users can use the following sample DevStack local.conf to build the OpenStack controller node. Make sure your DevStack branch is consistent with the OpenStack branch, for example "stable/mitaka".

$ git clone -b stable/mitaka https://git.openstack.org/openstack-dev/devstack

[[local|localrc]]
HOST_IP=10.134.231.28
SERVICE_HOST=10.134.231.28
RABBIT_HOST=10.134.231.28
DATABASE_HOST=10.134.231.28
Q_HOST=10.134.231.28
ADMIN_PASSWORD=nova
DATABASE_PASSWORD=$ADMIN_PASSWORD
RABBIT_PASSWORD=$ADMIN_PASSWORD
SERVICE_PASSWORD=$ADMIN_PASSWORD
SERVICE_TOKEN=$ADMIN_PASSWORD
DATABASE_TYPE=mysql
# Log
SCREEN_LOGDIR=/opt/stack/logs/screen
# Images
FORCE_CONFIG_DRIVE=True
# Networks
Q_ML2_TENANT_NETWORK_TYPE=vxlan
Q_ML2_PLUGIN_MECHANISM_DRIVERS=onos_ml2
Q_PLUGIN_EXTRA_CONF_PATH=/opt/stack/networking-onos/etc/neutron/plugins/ml2
Q_PLUGIN_EXTRA_CONF_FILES=(ml2_conf_onos.ini)
ML2_L3_PLUGIN=onos_router
NEUTRON_CREATE_INITIAL_NETWORKS=False
# Services
enable_service q-svc
disable_service n-net
disable_service n-cpu
disable_service tempest
disable_service c-sch
disable_service c-api
disable_service c-vol
# Branches
GLANCE_BRANCH=stable/mitaka
HORIZON_BRANCH=stable/mitaka
KEYSTONE_BRANCH=stable/mitaka
NEUTRON_BRANCH=stable/mitaka
NOVA_BRANCH=stable/mitaka

If you use another deployment tool or build OpenStack manually, refer to the following Nova and Neutron configurations.

core_plugin = onos_ml2
service_plugins = onos_router
dhcp_agent_notification = False

[ml2]
tenant_network_types = vxlan
type_drivers = vxlan
mechanism_drivers = onos_ml2
[securitygroup]
enable_security_group = True

Set Nova to use the config drive for the metadata service, so that the Neutron metadata-agent does not need to run. Also set Neutron as the network service.

[DEFAULT]
force_config_drive = True
network_api_class = nova.network.neutronv2.api.API
security_group_api = neutron
[neutron]
url = http://10.134.231.28:9696
auth_strategy = keystone
admin_auth_url = http://10.134.231.28:35357/v2.0
admin_tenant_name = service
admin_username = neutron
admin_password = [admin passwd]

Don't forget to pass ml2_conf_onos.ini as a config file when you start the Neutron service.

/usr/bin/python /usr/local/bin/neutron-server --config-file /etc/neutron/neutron.conf --config-file /etc/neutron/plugins/ml2/ml2_conf.ini --config-file /opt/stack/networking-onos/etc/neutron/plugins/ml2/ml2_conf_onos.ini

Compute node

No special configurations are required for compute node other than setting network api to Neutron. For DevStack users, here's a sample DevStack local.conf.

[[local|localrc]]
HOST_IP=10.134.231.30
SERVICE_HOST=10.134.231.28
RABBIT_HOST=10.134.231.28
DATABASE_HOST=10.134.231.28
ADMIN_PASSWORD=nova
DATABASE_PASSWORD=$ADMIN_PASSWORD
RABBIT_PASSWORD=$ADMIN_PASSWORD
SERVICE_PASSWORD=$ADMIN_PASSWORD
SERVICE_TOKEN=$ADMIN_PASSWORD
DATABASE_TYPE=mysql
NOVA_VNC_ENABLED=True
VNCSERVER_PROXYCLIENT_ADDRESS=$HOST_IP
VNCSERVER_LISTEN=$HOST_IP
LIBVIRT_TYPE=kvm
# Log
SCREEN_LOGDIR=/opt/stack/logs/screen
# Services
ENABLED_SERVICES=n-cpu,neutron
# Branches
NOVA_BRANCH=stable/mitaka
KEYSTONE_BRANCH=stable/mitaka
NEUTRON_BRANCH=stable/mitaka

If your compute node is a VM, try nested KVM first (see http://docs.openstack.org/developer/devstack/guides/devstack-with-nested-kvm.html) or set LIBVIRT_TYPE=qemu. Use nested KVM if possible, since it is much faster than qemu.

For manual setup, set Neutron as the network API in the Nova configuration.

[DEFAULT]
force_config_drive = True
network_api_class = nova.network.neutronv2.api.API
security_group_api = neutron
[neutron]
url = http://10.134.231.28:9696
auth_strategy = keystone
admin_auth_url = http://10.134.231.28:35357/v2.0
admin_tenant_name = service
admin_username = neutron
admin_password = [admin passwd]

Gateway node

No OpenStack service is required for a gateway node.

ONOS-SONA Setup

1. Refer to the SONA Network Configuration Guide and write a network configuration file, typically named network-cfg.json. Place the configuration file under tools/package/config/, then build, package, and install ONOS. Note that building and installing the openstacknetworking application requires additional steps because the application does not fully support the Buck build yet.

# SONA cluster (1-node)
export OC1=onos-01
export ONOS_APPS="drivers,openflow-base"

onos$ buck build onos
onos$ cp ~/network-cfg.json ~/onos/tools/package/config/
onos$ onos-package
onos$ stc setup
onos$ onos-buck-publish-local
onos$ onos-buck publish --to-local-repo //protocols/ovsdb/api:onos-protocols-ovsdb-api
onos$ onos-buck publish --to-local-repo //protocols/ovsdb/rfc:onos-protocols-ovsdb-rfc
onos$ onos-buck publish --to-local-repo //apps/openstacknode:onos-apps-openstacknode
onos$ cd apps/openstacknetworking; mci;
onos$ onos-app $OC1 reinstall! target/onos-app-openstacknetworking-1.10.0-SNAPSHOT.oar

2. Check all applications are activated successfully.

onos> apps -a -s
*   9 org.onosproject.ovsdb-base           1.10.0.SNAPSHOT OVSDB Provider
*  13 org.onosproject.optical-model        1.10.0.SNAPSHOT Optical information model
*  20 org.onosproject.drivers              1.10.0.SNAPSHOT Default device drivers
*  39 org.onosproject.drivers.ovsdb        1.10.0.SNAPSHOT OVSDB Device Drivers
*  47 org.onosproject.openflow-base        1.10.0.SNAPSHOT OpenFlow Provider
*  56 org.onosproject.openstacknode        1.10.0.SNAPSHOT OpenStack Node Bootstrap App
*  57 org.onosproject.openstacknetworking  1.10.0.SNAPSHOT OpenStack Networking App
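If the listing is captured to text, the check in step 2 can be scripted. The sketch below assumes each active application is printed as a line starting with "*", followed by a numeric ID and the application name, as in the listing above. The required set is an assumption taken from that listing, and the function names are hypothetical.

```python
# Assumption: the applications SONA needs, taken from the listing above.
REQUIRED_APPS = (
    "org.onosproject.ovsdb-base",
    "org.onosproject.drivers",
    "org.onosproject.drivers.ovsdb",
    "org.onosproject.openflow-base",
    "org.onosproject.openstacknode",
    "org.onosproject.openstacknetworking",
)


def active_apps(apps_output):
    """Collect application names from 'apps -a -s' output."""
    names = set()
    for line in apps_output.splitlines():
        fields = line.split()
        # Active entries look like: * 57 org.onosproject.openstacknetworking ...
        if len(fields) >= 3 and fields[0] == "*" and fields[1].isdigit():
            names.add(fields[2])
    return names


def missing_apps(apps_output, required=REQUIRED_APPS):
    """Return the required applications that are not listed as active."""
    active = active_apps(apps_output)
    return [name for name in required if name not in active]
```

An empty result from missing_apps means every application in the assumed required set is active.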

3. Check with the openstack-nodes command that all nodes are registered and that every COMPUTE type node is in the COMPLETE state. If a node is INCOMPLETE, use the openstack-node-check command for more detailed state. To reinitialize a particular compute node only, use the openstack-node-init command with its hostname. Leave GATEWAY type nodes in the DEVICE_CREATED state for now; they need the additional configuration explained later.

onos> openstack-nodes
Hostname          Type      Integration Bridge    Router Bridge         Management IP   Data IP      VLAN Intf   State
sona-compute-01   COMPUTE   of:00000000000000a1                         10.1.1.162      10.1.1.162               COMPLETE
sona-compute-02   COMPUTE   of:00000000000000a2                         10.1.1.163      10.1.1.163               COMPLETE
sona-gateway-02   GATEWAY   of:00000000000000a4   of:00000000000000b4   10.1.1.165      10.1.1.165               DEVICE_CREATED
Total 3 nodes
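The state check in step 3 can also be automated on captured openstack-nodes output. This sketch assumes the hostname is the first column, the node type the second, and the state the last; the function names are hypothetical.

```python
NODE_TYPES = ("COMPUTE", "GATEWAY")


def node_states(nodes_output):
    """Map each hostname to a (type, state) pair from 'openstack-nodes' output."""
    states = {}
    for line in nodes_output.splitlines():
        fields = line.split()
        # Skip the prompt, header, and total lines: only node rows have a
        # known node type in the second column.
        if len(fields) >= 3 and fields[1] in NODE_TYPES:
            states[fields[0]] = (fields[1], fields[-1])
    return states


def incomplete_compute_nodes(nodes_output):
    """Return the sorted hostnames of COMPUTE nodes that are not COMPLETE."""
    return sorted(
        host
        for host, (node_type, state) in node_states(nodes_output).items()
        if node_type == "COMPUTE" and state != "COMPLETE"
    )
```

Any hostname returned by incomplete_compute_nodes is a candidate for openstack-node-check and openstack-node-init. Gateway nodes are ignored on purpose, since they are expected to stay in DEVICE_CREATED at this point.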

Gateway Node and ONOS-vRouter Setup

Single Gateway Node Setup

1. Every GATEWAY type node requires Quagga and an additional ONOS instance. Let's download and install Docker and the required Python packages first.

$ wget -qO- https://get.docker.com/ | sudo sh
$ sudo apt-get install python-pip -y
$ sudo pip install oslo.config
$ sudo pip install ipaddress

2. Download sona-setup scripts as well.

$ git clone https://github.com/sonaproject/sona-setup.git
$ cd sona-setup

3. Write vRouterConfig.ini and place it under sona-setup directory.

[DEFAULT]
routerBridge = "of:00000000000000b1"
floatingCidr = "172.27.0.0/24,172.28.0.0/24"
dummyHostIp = "172.27.0.1"
quaggaMac = "fe:00:00:00:00:01"
quaggaIp = "172.18.0.254/30"
gatewayName = "gateway-01"
bgpNeighborIp = "172.18.0.253/30"
asNum = 65101
peerAsNum = 65100
uplinkPortNum = "3"

- line 2, routerBridge: Router bridge device ID configured in the network configuration. It should be unique across the system.

- line 3, floatingCidr: Floating IP address ranges. It can be a comma-separated list.

- line 4, dummyHostIp: Gateway IP of the floating IP address range. It can be a comma-separated list.

- line 5, quaggaMac: Local MAC address for peering with external router. It should be unique across the system.

- line 6, quaggaIp: Local IP address for peering with external router. It should be unique across the system.

- line 7, gatewayName: Hostname to be configured in Quagga.

- line 8, bgpNeighborIp: Remote peer's IP address.

- line 9, asNum: Local AS number.

- line 10, peerAsNum: Remote peer's AS number.

- line 11, uplinkPortNum: Port number of uplink interface on br-router bridge.
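Some of the field rules above can be checked before the setup scripts are run. The following sketch is not part of sona-setup. It reads the [DEFAULT] section with Python's configparser and uses the ipaddress module to check two assumptions: each dummyHostIp falls inside one of the floatingCidr ranges, and quaggaIp and bgpNeighborIp share a subnet. The function names and messages are hypothetical.

```python
import configparser
import ipaddress


def load_vrouter_config(text):
    """Parse vRouterConfig.ini content into a dict of unquoted strings."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.optionxform = str  # keep camelCase option names as written
    parser.read_string(text)
    return {key: value.strip().strip('"') for key, value in parser.defaults().items()}


def split_list(value):
    """Split a comma-separated value into trimmed, non-empty items."""
    return [item.strip() for item in value.split(",") if item.strip()]


def check_vrouter_config(cfg):
    """Return a list of problems; malformed addresses raise ValueError."""
    problems = []
    cidrs = [ipaddress.ip_network(c) for c in split_list(cfg["floatingCidr"])]
    for host in split_list(cfg["dummyHostIp"]):
        if not any(ipaddress.ip_address(host) in net for net in cidrs):
            problems.append("dummyHostIp %s is outside every floatingCidr" % host)
    local = ipaddress.ip_interface(cfg["quaggaIp"])
    peer = ipaddress.ip_interface(cfg["bgpNeighborIp"])
    if local.network != peer.network:
        problems.append("quaggaIp and bgpNeighborIp are in different subnets")
    if local.ip == peer.ip:
        problems.append("quaggaIp and bgpNeighborIp are identical")
    return problems
```

With the sample configuration from step 3, check_vrouter_config(load_vrouter_config(text)) returns an empty list, because 172.18.0.254/30 and 172.18.0.253/30 both belong to 172.18.0.252/30 and 172.27.0.1 lies in 172.27.0.0/24.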

4. Run createJsonAndvRouter.sh. It creates the vRouter configuration, vrouter.json, and then brings up an ONOS container with the vRouter application activated.

sona-setup$ ./createJsonAndvRouter.sh
sona-setup$ sudo docker ps
CONTAINER ID   IMAGE                  COMMAND                CREATED      STATUS      PORTS                                    NAMES
e5ac67e62bbb   onosproject/onos:1.6   "./bin/onos-service"   9 days ago   Up 9 days   6653/tcp, 8101/tcp, 8181/tcp, 9876/tcp   onos-vrouter

5. Next, run createQuagga.sh. It creates the Quagga configurations, zebra.conf and bgpd.conf, with the floating IP ranges, and then brings up a Quagga container. It also regenerates vrouter.json with the Quagga container's port number and restarts the ONOS container.

sona-setup$ ./createQuagga.sh
sona-setup$ sudo docker ps
CONTAINER ID   IMAGE                  COMMAND                  CREATED        STATUS        PORTS                                    NAMES
978dadf41240   onosproject/onos:1.6   "./bin/onos-service"     11 hours ago   Up 11 hours   6653/tcp, 8101/tcp, 8181/tcp, 9876/tcp   onos-vrouter
5bf4f2d59919   hyunsun/quagga-fpm     "/usr/bin/supervisord"   11 hours ago   Up 11 hours   179/tcp, 2601/tcp, 2605/tcp              gateway-01

! -*- bgp -*-
!
! BGPd sample configuration file
!
!
hostname gateway-01
password zebra
!
router bgp 65101
  bgp router-id 172.18.0.254
  timers bgp 3 9
  neighbor 172.18.0.253 remote-as 65100
  neighbor 172.18.0.253 ebgp-multihop
  neighbor 172.18.0.253 timers connect 5
  neighbor 172.18.0.253 advertisement-interval 5
  network 172.27.0.0/24
!
log file /var/log/quagga/bgpd.log

!
hostname gateway-01
password zebra
!
fpm connection ip 172.17.0.2 port 2620

If you check the result of ovs-ofctl show, there should be a new port named quagga on br-router bridge.

sona-setup$ sudo ovs-ofctl show br-router

OFPT_FEATURES_REPLY (xid=0x2): dpid:00000000000000b1

n_tables:254, n_buffers:256

capabilities: FLOW_STATS TABLE_STATS PORT_STATS QUEUE_STATS ARP_MATCH_IP

actions: output enqueue set_vlan_vid set_vlan_pcp strip_vlan mod_dl_src mod_dl_dst mod_nw_src mod_nw_dst mod_nw_tos mod_tp_src mod_tp_dst

1(patch-rout): addr:1a:46:69:5a:8e:f6

config: 0

state: 0

speed: 0 Mbps now, 0 Mbps max

2(quagga): addr:7a:9b:05:57:2c:ff

config: 0

state: 0

current: 10GB-FD COPPER

speed: 10000 Mbps now, 0 Mbps max

LOCAL(br-router): addr:1a:13:72:57:4a:4d

config: PORT_DOWN

state: LINK_DOWN

speed: 0 Mbps now, 0 Mbps max

OFPT_GET_CONFIG_REPLY (xid=0x4): frags=normal miss_send_len=0

6. If there is no external router, real or emulated, in your setup, add another Quagga container that acts as an external router by running createQuaggaRouter.sh. It creates the Quagga configurations for the external router and then brings up another Quagga container with them. It also updates the uplink port number in vRouterConfig.ini and vrouter.json with the port number of the newly added external router container and then restarts ONOS.

sona-setup$ ./createQuaggaRouter.sh
sona-setup$ sudo docker ps
CONTAINER ID   IMAGE                  COMMAND                  CREATED        STATUS        PORTS                                    NAMES
978dadf41240   onosproject/onos:1.6   "./bin/onos-service"     11 hours ago   Up 11 hours   6653/tcp, 8101/tcp, 8181/tcp, 9876/tcp   onos-vrouter
32b10a038d78   hyunsun/quagga-fpm     "/usr/bin/supervisord"   11 hours ago   Up 11 hours   179/tcp, 2601/tcp, 2605/tcp              router-01
5bf4f2d59919   hyunsun/quagga-fpm     "/usr/bin/supervisord"   11 hours ago   Up 11 hours   179/tcp, 2601/tcp, 2605/tcp              gateway-01

! -*- bgp -*-
!
! BGPd sample configuration file
!
!
hostname router-01
password zebra
!
router bgp 65100
  bgp router-id 172.18.0.253
  timers bgp 3 9
  neighbor 172.18.0.254 remote-as 65101
  neighbor 172.18.0.254 ebgp-multihop
  neighbor 172.18.0.254 timers connect 5
  neighbor 172.18.0.254 advertisement-interval 5
  neighbor 172.18.0.254 default-originate
!
log file /var/log/quagga/bgpd.log

!
hostname router-01
password zebra
!

If you check the result of ovs-ofctl show, there should be a new port named quagga-router on br-router bridge.

sona-setup$ sudo ovs-ofctl show br-router
OFPT_FEATURES_REPLY (xid=0x2): dpid:00000000000000b1
n_tables:254, n_buffers:256
capabilities: FLOW_STATS TABLE_STATS PORT_STATS QUEUE_STATS ARP_MATCH_IP
actions: output enqueue set_vlan_vid set_vlan_pcp strip_vlan mod_dl_src mod_dl_dst mod_nw_src mod_nw_dst mod_nw_tos mod_tp_src mod_tp_dst
 1(patch-rout): addr:1a:46:69:5a:8e:f6
     config: 0
     state: 0
     speed: 0 Mbps now, 0 Mbps max
 2(quagga): addr:7a:9b:05:57:2c:ff
     config: 0
     state: 0
     current: 10GB-FD COPPER
     speed: 10000 Mbps now, 0 Mbps max
 3(quagga-router): addr:c6:f5:68:d6:ff:56
     config: 0
     state: 0
     current: 10GB-FD COPPER
     speed: 10000 Mbps now, 0 Mbps max
 LOCAL(br-router): addr:1a:13:72:57:4a:4d
     config: PORT_DOWN
     state: LINK_DOWN
     speed: 0 Mbps now, 0 Mbps max
OFPT_GET_CONFIG_REPLY (xid=0x4): frags=normal miss_send_len=0

7. Now check hosts, fpm-connections, next-hops, and routes from ONOS-vRouter. You should see the default route (0.0.0.0/0) with the external router as its next hop.

onos> hosts
id=FA:00:00:00:00:01/None, mac=FA:00:00:00:00:01, location=of:00000000000000b1/25, vlan=None, ip(s)=[172.18.0.253]
id=FE:00:00:00:00:02/None, mac=FE:00:00:00:00:02, location=of:00000000000000b1/1, vlan=None, ip(s)=[172.27.0.1], name=FE:00:00:00:00:02/None
onos> fpm-connections
172.17.0.3:52332 connected since 6m ago
onos> next-hops
ip=172.18.0.253, mac=FA:00:00:00:00:01, numRoutes=1
onos> routes
Table: ipv4
   Network            Next Hop
   0.0.0.0/0          172.18.0.253
   Total: 1
Table: ipv6
   Network            Next Hop
   Total: 0

8. Manually add a route for the floating IP ranges and check the routes again.

onos> route-add 172.27.0.0/24 172.27.0.1
onos> routes
Table: ipv4
   Network            Next Hop
   0.0.0.0/0          172.18.0.253
   172.27.0.0/24      172.27.0.1
   Total: 2
Table: ipv6
   Network            Next Hop
   Total: 0
onos> next-hops
ip=172.18.0.253, mac=FA:00:00:00:00:01, numRoutes=1
ip=172.27.0.1, mac=FE:00:00:00:00:02, numRoutes=1
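When several floating IP ranges are configured, the route-add commands of step 8 can be derived from the floatingCidr and dummyHostIp values. This is a sketch under the assumption that each range is routed to the gateway IP that lies inside it; the function name is hypothetical, and ranges without a matching gateway IP are skipped.

```python
import ipaddress


def route_add_commands(floating_cidrs, gateway_ips):
    """Build one ONOS 'route-add' command per floating range with a gateway."""
    commands = []
    for cidr in floating_cidrs:
        net = ipaddress.ip_network(cidr)
        for gateway in gateway_ips:
            # Use the first gateway IP that belongs to this range.
            if ipaddress.ip_address(gateway) in net:
                commands.append("route-add %s %s" % (net, gateway))
                break
    return commands
```

For the sample configuration, route_add_commands(["172.27.0.0/24", "172.28.0.0/24"], ["172.27.0.1"]) yields the single command used in step 8, since no gateway IP is listed for 172.28.0.0/24.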

9. Everything's ready! Initialize the gateway node again by running the openstack-node-init command from ONOS-SONA.

onos> openstack-node-init gateway-01

onos> openstack-nodes
Hostname          Type      Integration Bridge    Router Bridge         Management IP   Data IP      VLAN Intf   State
sona-compute-01   COMPUTE   of:00000000000000a1                         10.1.1.162      10.1.1.162               COMPLETE
sona-compute-02   COMPUTE   of:00000000000000a2                         10.1.1.163      10.1.1.163               COMPLETE
sona-gateway-02   GATEWAY   of:00000000000000a4   of:00000000000000b4   10.1.1.165      10.1.1.165               COMPLETE
Total 3 nodes

Multiple Gateway Nodes Setup

SONA allows multiple gateway nodes for scalability as well as HA. Adding another gateway node is easy: add the node configuration to the ONOS-SONA network configuration and initialize the node so that its state becomes DEVICE_CREATED. Then repeat the single gateway node setup steps above on the new gateway node. Don't forget to use unique values for quaggaMac and quaggaIp. Here is an example configuration for the second gateway node.

[DEFAULT]
routerBridge = "of:00000000000000b2"
floatingCidr = "172.27.0.0/24"
dummyHostIp = "172.27.0.1"
quaggaMac = "fe:00:00:00:00:03"
quaggaIp = "172.18.0.250/30"
gatewayName = "gateway-02"
bgpNeighborIp = "172.18.0.249/30"
asNum = 65101
peerAsNum = 65100
uplinkPortNum = "3"
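Since routerBridge, quaggaMac, and quaggaIp must be unique across the system, a small check over all gateway configurations can catch copy-and-paste mistakes. The sketch below assumes each configuration has already been loaded into a dict keyed by the option names; the function name is hypothetical.

```python
from collections import defaultdict

# Options that must be unique across all gateway nodes.
UNIQUE_KEYS = ("routerBridge", "quaggaMac", "quaggaIp")


def find_duplicates(gateways, keys=UNIQUE_KEYS):
    """Map (option, value) to the gateway names that share that value."""
    seen = defaultdict(list)
    for gateway in gateways:
        for key in keys:
            # Compare case-insensitively so FE:.. and fe:.. count as equal.
            seen[(key, gateway[key].lower())].append(gateway["gatewayName"])
    return {pair: names for pair, names in seen.items() if len(names) > 1}
```

An empty dict means no two gateways share a value that has to be unique.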

You'll have to enable multipath in your external router as well.

router bgp 65100
   timers bgp 3 9
   distance bgp 20 200 200
   maximum-paths 2 ecmp 2
   neighbor 172.18.0.254 remote-as 65101
   neighbor 172.18.0.254 maximum-routes 12000
   neighbor 172.18.0.250 remote-as 65101
   neighbor 172.18.0.250 maximum-routes 12000
   redistribute connected

#routed port connected to gateway-01
interface Ethernet43
   no switchport
   ip address 172.18.0.253/30
#routed port connected to gateway-02
interface Ethernet44
   no switchport
   ip address 172.18.0.249/30

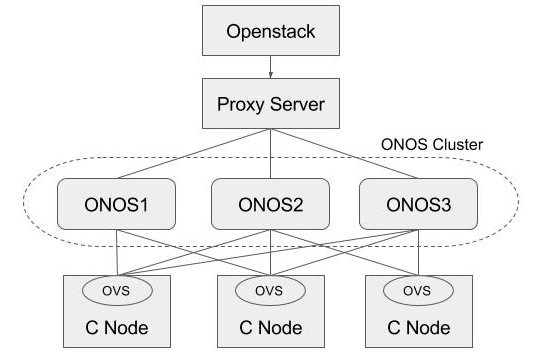

HA Setup

ONOS itself provides HA by default when there are multiple instances in the cluster. This section describes how to add a proxy server in front of the ONOS cluster and use it in Neutron as a single access point to the cluster. HAProxy (http://www.haproxy.org) is used as the proxy server here.

1. Install HAProxy.

$ sudo add-apt-repository -y ppa:vbernat/haproxy-1.5
$ sudo apt-get update
$ sudo apt-get install -y haproxy

2. Configure HA proxy.

global

log /dev/log local0

log /dev/log local1 notice

chroot /var/lib/haproxy

stats socket /run/haproxy/admin.sock mode 660 level admin

stats timeout 30s

user haproxy

group haproxy

daemon

# Default SSL material locations

ca-base /etc/ssl/certs

crt-base /etc/ssl/private

# Default ciphers to use on SSL-enabled listening sockets.

# For more information, see ciphers(1SSL). This list is from:

# https://hynek.me/articles/hardening-your-web-servers-ssl-ciphers/

ssl-default-bind-ciphers ECDH+AESGCM:DH+AESGCM:ECDH+AES256:DH+AES256:ECDH+AES128:DH+AES:ECDH+3DES:DH+3DES:RSA+AESGCM:RSA+AES:RSA+3DES:!aNULL:!MD5:!DSS

ssl-default-bind-options no-sslv3

defaults

log global

mode http

option httplog

option dontlognull

timeout connect 5000

timeout client 50000

timeout server 50000

errorfile 400 /etc/haproxy/errors/400.http

errorfile 403 /etc/haproxy/errors/403.http

errorfile 408 /etc/haproxy/errors/408.http

errorfile 500 /etc/haproxy/errors/500.http

errorfile 502 /etc/haproxy/errors/502.http

errorfile 503 /etc/haproxy/errors/503.http

errorfile 504 /etc/haproxy/errors/504.http

frontend localnodes

bind *:8181

mode http

default_backend nodes

backend nodes

mode http

balance roundrobin

option forwardfor

http-request set-header X-Forwarded-Port %[dst_port]

http-request add-header X-Forwarded-Proto https if { ssl_fc }

option httpchk GET /onos/ui/login.html

server web01 [onos-01 IP address]:8181 check

server web02 [onos-02 IP address]:8181 check

server web03 [onos-03 IP address]:8181 check

listen stats *:1936

stats enable

stats uri /

stats hide-version

stats auth someuser:password
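The server lines in the backend section follow a fixed pattern, one per ONOS instance, so they can be generated from the list of cluster addresses. A minimal sketch; the function name is hypothetical and the addresses in the usage note are placeholders.

```python
def backend_server_lines(onos_ips, port=8181):
    """Render one HAProxy 'server' line per ONOS instance, named web01, web02, ..."""
    return [
        "server web%02d %s:%d check" % (index, ip, port)
        for index, ip in enumerate(onos_ips, start=1)
    ]
```

For instance, backend_server_lines(["10.0.0.1", "10.0.0.2", "10.0.0.3"]) produces three lines in the same form as the web01 to web03 entries above, with the placeholder addresses filled in.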

3. Set url_path to point to the proxy server in Neutron ML2 ONOS mechanism driver configuration and restart Neutron.

# Configuration options for ONOS ML2 Mechanism driver
[onos]
# (StrOpt) ONOS ReST interface URL. This is a mandatory field.
url_path = http://[proxy-server IP]:8181/onos/openstacknetworking
# (StrOpt) Username for authentication. This is a mandatory field.
username = onos
# (StrOpt) Password for authentication. This is a mandatory field.
password = rocks

4. Stop one of the ONOS instances and check that everything still works.

$ onos-service $OC1 stop