This page explains how to set up and use the CORD-VTN service.

You will need:

- An ONOS cluster installed and running (see ONOS documentation to get to this point)

- An OpenStack service installed and running (detailed OpenStack configurations are described here)

- (TODO: An XOS installed and running)

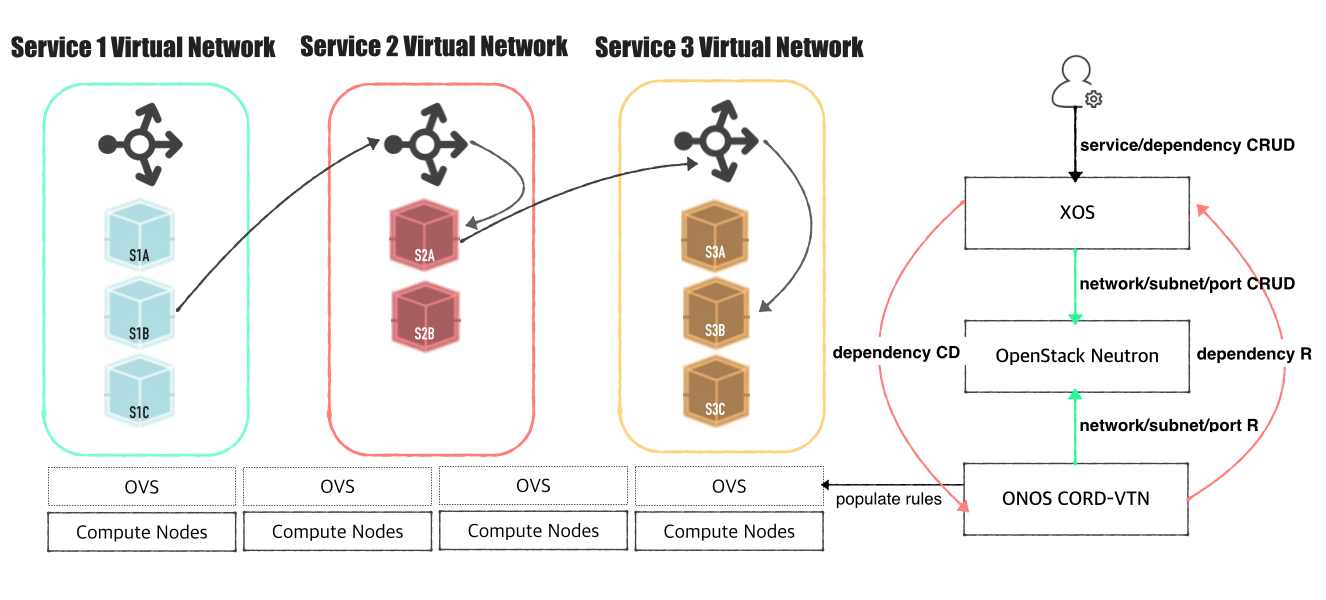

Architecture

The high level architecture of the system is shown in the following figure.

OpenStack Settings

Many guides for installing OpenStack are available on the Internet. The instructions here cover only the settings that matter for the CORD VTN service.

You will need:

- Controller cluster: at least one machine with 4 GB of RAM, running the database, the message queue server, and the OpenStack services, including Nova, Neutron, Glance, and Keystone.

- Compute nodes: at least one machine with 2 GB of RAM, running only the nova-compute agent. (Do not run the Neutron ovs-agent on compute nodes.)

Controller Node

Install networking-onos (the Neutron ML2 plugin for ONOS) first.

    $ git clone https://github.com/openstack/networking-onos.git
    $ cd networking-onos
    $ sudo python setup.py install

Specify the ONOS access information in the plugin's config file, conf_onos.ini. You may want to copy this file to /etc/neutron/plugins/ml2/, where the other Neutron configuration files are.

    # Configuration options for ONOS ML2 Mechanism driver
    [onos]
    # (StrOpt) ONOS ReST interface URL. This is a mandatory field.
    url_path = http://onos.instance.ip.addr:8181/onos/openstackswitching
    # (StrOpt) Username for authentication. This is a mandatory field.
    username = onos
    # (StrOpt) Password for authentication. This is a mandatory field.
    password = rocks
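Since all three options in the [onos] section are marked mandatory, it can help to check the file before restarting Neutron. The following is a minimal sketch using only the Python standard library; the helper name `missing_onos_options` is hypothetical and is not part of networking-onos.

```python
import configparser

# Options the sample file marks as mandatory.
MANDATORY = ("url_path", "username", "password")


def missing_onos_options(ini_text):
    """Return the mandatory [onos] options that are absent or empty."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(ini_text)
    if not parser.has_section("onos"):
        return list(MANDATORY)
    return [key for key in MANDATORY
            if not parser.get("onos", key, fallback="").strip()]


sample = """
# Configuration options for ONOS ML2 Mechanism driver
[onos]
url_path = http://onos.instance.ip.addr:8181/onos/openstackswitching
username = onos
password = rocks
"""

print(missing_onos_options(sample))  # prints []
```

To check a real file, read it first, for example with `open(path).read()`, and pass the text to the helper.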

Set Neutron to use the ONOS ML2 plugin and disable DHCP agent notification.

In neutron.conf:

    core_plugin = neutron.plugins.ml2.plugin.Ml2Plugin
    dhcp_agent_notification = False

In ml2_conf.ini:

    [ml2]
    tenant_network_types = vxlan
    type_drivers = vxlan
    mechanism_drivers = onos_ml2

    [ml2_type_vxlan]
    vni_ranges = 1001:2000

Set Nova to use config drive for metadata service, so that we don't need to launch Neutron metadata-agent.

    [DEFAULT]
    force_config_drive = always
    network_api_class = nova.network.neutronv2.api.API

    [neutron]
    url = http://[controller_ip]:9696
    auth_strategy = keystone
    admin_auth_url = http://[controller_ip]:35357/v2.0
    admin_tenant_name = service
    admin_username = neutron
    admin_password = [admin passwd]

All the other configurations are up to your development settings. Don't forget to specify conf_onos.ini when you launch the Neutron service.

    /usr/bin/python /usr/local/bin/neutron-server \
        --config-file /etc/neutron/neutron.conf \
        --config-file /etc/neutron/plugins/ml2/ml2_conf.ini \
        --config-file /opt/stack/networking-onos/etc/conf_onos.ini

Here's a sample DevStack local.conf for the controller node.

    [[local|localrc]]
    HOST_IP=10.134.231.28
    SERVICE_HOST=10.134.231.28
    RABBIT_HOST=10.134.231.28
    DATABASE_HOST=10.134.231.28
    Q_HOST=10.134.231.28

    ADMIN_PASSWORD=nova
    DATABASE_PASSWORD=$ADMIN_PASSWORD
    RABBIT_PASSWORD=$ADMIN_PASSWORD
    SERVICE_PASSWORD=$ADMIN_PASSWORD
    SERVICE_TOKEN=$ADMIN_PASSWORD

    DATABASE_TYPE=mysql

    # Log
    SCREEN_LOGDIR=/opt/stack/logs/screen

    # Images
    IMAGE_URLS="http://cloud-images.ubuntu.com/releases/precise/release/ubuntu-12.04-server-cloudimg-amd64.tar.gz"
    FORCE_CONFIG_DRIVE=always

    NEUTRON_CREATE_INITIAL_NETWORKS=False
    Q_ML2_PLUGIN_MECHANISM_DRIVERS=onos_ml2
    Q_PLUGIN_EXTRA_CONF_PATH=/opt/stack/networking-onos/etc
    Q_PLUGIN_EXTRA_CONF_FILES=(conf_onos.ini)

    # Services
    enable_service q-svc
    disable_service n-net
    disable_service n-cpu
    disable_service tempest
    disable_service c-sch
    disable_service c-api
    disable_service c-vol

Compute Node

No special configuration is required for the compute node. Just launch the nova-compute agent with appropriate hypervisor settings.

Here's a sample DevStack local.conf for a compute node.

    [[local|localrc]]
    # Local IP
    HOST_IP=10.134.231.30
    # Controller IP, must be reachable from your test browser for console access from Horizon
    SERVICE_HOST=162.243.x.x
    RABBIT_HOST=10.134.231.28
    DATABASE_HOST=10.134.231.28

    ADMIN_PASSWORD=nova
    DATABASE_PASSWORD=$ADMIN_PASSWORD
    RABBIT_PASSWORD=$ADMIN_PASSWORD
    SERVICE_PASSWORD=$ADMIN_PASSWORD
    SERVICE_TOKEN=$ADMIN_PASSWORD

    DATABASE_TYPE=mysql

    NOVA_VNC_ENABLED=True
    VNCSERVER_PROXYCLIENT_ADDRESS=$HOST_IP
    VNCSERVER_LISTEN=$HOST_IP

    # Log
    SCREEN_LOGDIR=/opt/stack/logs/screen

    # Images
    IMAGE_URLS="http://cloud-images.ubuntu.com/releases/precise/release/ubuntu-12.04-server-cloudimg-amd64.tar.gz"
    LIBVIRT_TYPE=kvm

    # Services
    ENABLED_SERVICES=n-cpu,neutron

If your compute node is a VM, first try the DevStack nested KVM guide (http://docs.openstack.org/developer/devstack/guides/devstack-with-nested-kvm.html), or set LIBVIRT_TYPE=qemu. Nested KVM is much faster than qemu, so prefer it where it is available.

Other Settings

1. Set OVSDB to listening mode on your compute nodes. There are two ways to do this. The first is to add the remote at runtime:

    $ ovs-appctl -t ovsdb-server ovsdb-server/add-remote ptcp:6640:host_ip

Or you can make the setting permanent by adding the following line to /usr/share/openvswitch/scripts/ovs-ctl, right after the line that starts with set ovsdb-server "$DB_FILE". You need to restart the openvswitch-switch service afterwards.

    set "$@" --remote=ptcp:6640

Either way, you should see port 6640 in the listening state.

    $ netstat -ntl
    Active Internet connections (only servers)
    Proto Recv-Q Send-Q Local Address           Foreign Address         State
    tcp        0      0 0.0.0.0:22              0.0.0.0:*               LISTEN
    tcp        0      0 0.0.0.0:6640            0.0.0.0:*               LISTEN
    tcp6       0      0 :::22

2. Check that your OVSDB is clean, that is, no bridges or ports are configured yet.

    $ sudo ovs-vsctl show
    cedbbc0a-f9a4-4d30-a3ff-ef9afa813efb
        ovs_version: "2.3.0"

3. Make sure that the ONOS user (sdn by default) can SSH from the ONOS instance to the compute nodes using key-based authentication.
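The listening check from step 1 can also be run from another host, for example the ONOS instance, which confirms that the port is reachable over the network as well. The sketch below is a small standard-library helper; the name `is_listening` is hypothetical, and the address in the usage line is only an example.

```python
import socket


def is_listening(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port can be established."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.settimeout(timeout)
        # connect_ex returns 0 on success and an error code otherwise.
        return sock.connect_ex((host, port)) == 0


if __name__ == "__main__":
    # Replace 127.0.0.1 with the management IP of a compute node.
    print(is_listening("127.0.0.1", 6640))
```

A result of True only shows that something accepts TCP connections on that port; it does not prove that the listener is ovsdb-server.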

ONOS Settings

Add the following configuration to your ONOS network-cfg.json. If you don't have a fabric controller and vRouter set up, you may also want to read the "SSH to VM/Access Internet from VM" part before creating the network-cfg.json file. One assumption here is that all compute nodes share the same OVSDB port, SSH port, and SSH account.

| Config Name | Description |
|---|---|
| gatewayMac | MAC address of the virtual network gateway |
| localManagementIp | Management IP for the connection between a compute node and its VMs; must be in CIDR notation |
| ovsdbPort | Port number for the OVSDB connection (OVSDB uses 6640 by default) |
| sshPort | Port number for the SSH connection |
| sshUser | SSH user name |
| sshKeyFile | Private key file for SSH |
| nodes | List of compute node information |
| nodes: hostname | Hostname of the compute node; should be unique throughout the service |
| nodes: hostManagementIp | Management IP between the head node and the compute node; used for the OpenFlow, OVSDB, and SSH sessions. Must be in CIDR notation. |
| nodes: dataPlaneIp | Data plane IP address; this IP is used for VXLAN tunneling |
| nodes: dataPlaneIntf | Name of the physical interface used for tunneling |
| nodes: bridgeId | Device ID of the integration bridge (br-int) |

    {
        "apps" : {
            "org.onosproject.cordvtn" : {
                "cordvtn" : {
                    "gatewayMac" : "00:00:00:00:00:01",
                    "localManagementIp" : "172.27.0.1/24",
                    "ovsdbPort" : "6640",
                    "sshPort" : "22",
                    "sshUser" : "hyunsun",
                    "sshKeyFile" : "~/.ssh/id_rsa",
                    "nodes" : [
                        {
                            "hostname" : "compute-01",
                            "hostManagementIp" : "10.55.25.244/24",
                            "dataPlaneIp" : "10.134.34.222/16",
                            "dataPlaneIntf" : "eth1",
                            "bridgeId" : "of:0000000000000001"
                        },
                        {
                            "hostname" : "compute-02",
                            "hostManagementIp" : "10.241.229.42/24",
                            "dataPlaneIp" : "10.134.34.223/16",
                            "dataPlaneIntf" : "eth1",
                            "bridgeId" : "of:0000000000000002"
                        }
                    ]
                }
            },
            "org.onosproject.openstackswitching" : {
                "openstackswitching" : {
                    "do_not_push_flows" : "true",
                    "neutron_server" : "http://10.243.139.46:9696/v2.0/",
                    "keystone_server" : "http://10.243.139.46:5000/v2.0/",
                    "user_name" : "admin",
                    "password" : "nova"
                }
            }
        }
    }
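Two constraints from the table above are easy to get wrong by hand: the management IPs must be in CIDR notation, and hostnames should be unique. The following sketch checks both on a parsed network-cfg.json. It is an illustrative pre-flight check, not something ONOS provides, and the function name `check_cordvtn_config` is hypothetical.

```python
import ipaddress
import json


def check_cordvtn_config(cfg):
    """Return a list of problems found in a parsed network-cfg.json."""
    vtn = cfg["apps"]["org.onosproject.cordvtn"]["cordvtn"]
    problems = []

    def check_cidr(label, value):
        # ip_interface() accepts a bare address, so require the prefix explicitly.
        if "/" not in value:
            problems.append(f"{label}: {value} has no prefix length")
            return
        try:
            ipaddress.ip_interface(value)
        except ValueError:
            problems.append(f"{label}: {value} is not a valid address")

    check_cidr("localManagementIp", vtn["localManagementIp"])
    seen = set()
    for node in vtn["nodes"]:
        name = node["hostname"]
        if name in seen:
            problems.append(f"duplicate hostname: {name}")
        seen.add(name)
        check_cidr(f"{name}.hostManagementIp", node["hostManagementIp"])
    return problems


example = json.loads("""
{"apps": {"org.onosproject.cordvtn": {"cordvtn": {
    "localManagementIp": "172.27.0.1/24",
    "nodes": [
        {"hostname": "compute-01", "hostManagementIp": "10.55.25.244/24"},
        {"hostname": "compute-02", "hostManagementIp": "10.241.229.42/24"}
    ]}}}}
""")
print(check_cordvtn_config(example))  # prints []
```

For a real file, load it with `json.load(open("network-cfg.json"))` and pass the result to the function.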

You should set your ONOS to activate at least the following applications.

ONOS_APPS=drivers,drivers.ovsdb,openflow-base,lldpprovider,cordvtn

How To Test

Before you start

Once OpenStack and ONOS with the CORD VTN app have started successfully, check that the system is ready.

1. Check your ONOS instance IPs are correctly set to the management IP. It should be accessible from compute nodes.

    onos> nodes
    id=10.203.255.221, address=159.203.255.221:9876, state=ACTIVE, updated=21h ago *
    id=10.243.151.127, address=162.243.151.127:9876, state=ACTIVE, updated=21h ago
    id=10.199.96.13, address=198.199.96.13:9876, state=ACTIVE, updated=21h ago

2. Check that your compute nodes are registered to the CordVtn service and are in the init COMPLETE state.

    onos> cordvtn-nodes
    hostname=compute-01, hostMgmtIp=10.55.25.244/24, dpIp=10.134.34.222/16, br-int=of:0000000000000001, dpIntf=eth1, init=COMPLETE
    hostname=compute-02, hostMgmtIp=10.241.229.42/24, dpIp=10.134.34.223/16, br-int=of:0000000000000002, dpIntf=eth1, init=INCOMPLETE
    Total 2 nodes

If the nodes listed in your network-cfg.json do not show up in the result, try pushing network-cfg.json to ONOS with the REST API.

curl --user onos:rocks -X POST -H "Content-Type: application/json" http://onos-01:8181/onos/v1/network/configuration/ -d @network-cfg.json

If all the nodes are listed but some of them are in the "INCOMPLETE" state, find out what the problem is with the "cordvtn-node-check" command and fix it.

Once you fix the problem, push network-cfg.json again to trigger init for all nodes (re-initializing nodes that are already COMPLETE does no harm) or use the "cordvtn-node-init" command.

    onos> cordvtn-node-check compute-02
    Integration bridge created/connected : OK (br-int)
    VXLAN interface created : OK
    Data plane interface added : NO (eth0)
    IP address set to br-int : NO (10.134.34.222/16)

3. Make sure all virtual switches on compute nodes are added and available in ONOS.

    onos> devices
    id=of:0000000000000001, available=true, role=MASTER, type=SWITCH, mfr=Nicira, Inc., hw=Open vSwitch, sw=2.0.2, serial=None, managementAddress=compute.01.ip.addr, protocol=OF_13, channelId=compute.01.ip.addr:39031
    id=of:0000000000000002, available=true, role=STANDBY, type=SWITCH, mfr=Nicira, Inc., hw=Open vSwitch, sw=2.0.2, serial=None, managementAddress=compute.02.ip.addr, protocol=OF_13, channelId=compute.02.ip.addr:44920

During the initialization process, OVSDB devices can be shown, for example ovsdb:10.241.229.42, when you list devices in your ONOS. Once it's done with node initialization, these OVSDB devices are removed and only OpenFlow devices are shown.

Now, it's ready.
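The check in step 2 and the configuration push can be scripted. The sketch below assumes you have captured the text printed by cordvtn-nodes (how you capture it is up to you) and mirrors the curl call shown earlier by building the equivalent POST request. Both function names are hypothetical, and the request is only built here, not sent.

```python
import base64
import urllib.request


def incomplete_nodes(cli_output):
    """Return hostnames from cordvtn-nodes output whose init state is not COMPLETE."""
    result = []
    for line in cli_output.splitlines():
        line = line.strip()
        if not line.startswith("hostname="):
            continue  # skips the prompt line and the "Total N nodes" summary
        fields = dict(part.split("=", 1) for part in line.split(", "))
        if fields.get("init") != "COMPLETE":
            result.append(fields["hostname"])
    return result


def build_push_request(url, user, password, cfg_bytes):
    """Build (but do not send) the POST that pushes network-cfg.json to ONOS."""
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    return urllib.request.Request(
        url,
        data=cfg_bytes,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
    )


output = """\
hostname=compute-01, hostMgmtIp=10.55.25.244/24, dpIp=10.134.34.222/16, br-int=of:0000000000000001, dpIntf=eth1, init=COMPLETE
hostname=compute-02, hostMgmtIp=10.241.229.42/24, dpIp=10.134.34.223/16, br-int=of:0000000000000002, dpIntf=eth1, init=INCOMPLETE
Total 2 nodes
"""
print(incomplete_nodes(output))  # prints ['compute-02']
```

To actually send the request, pass the object returned by `build_push_request` to `urllib.request.urlopen`.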

Without XOS

1. Test that VMs in the same network can talk to each other

You can test virtual networks and service chaining without XOS. First, create a network through OpenStack Horizon or the OpenStack CLI.

The network name should include one of the following six network types. (You may choose any one of them for now.)

- private

- private_direct

- private_indirect

- public_direct

- public_indirect

- management

    $ neutron net-create net-A-private
    $ neutron subnet-create net-A-private 10.0.0.0/24
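Because several type keywords are substrings of others ("private" is contained in "private_direct"), it can be useful to see which keywords a proposed network name contains before creating it. The helper below is a hypothetical sketch. It only reports matches, longest first, and makes no claim about how the CORD VTN service itself resolves a name that contains more than one keyword.

```python
# Type keywords listed above.
NETWORK_TYPES = (
    "private",
    "private_direct",
    "private_indirect",
    "public_direct",
    "public_indirect",
    "management",
)


def matching_types(network_name):
    """Return every type keyword contained in network_name, longest first."""
    hits = [t for t in NETWORK_TYPES if t in network_name]
    return sorted(hits, key=len, reverse=True)


print(matching_types("net-A-private"))  # prints ['private']
print(matching_types("net-B"))          # prints []
```

An empty list means the name contains none of the type keywords and should be changed.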

Then create VMs with the network.

$ nova boot --image f04ed5f1-3784-4f80-aee0-6bf83912c4d0 --flavor 1 --nic net-id=aaaf70a4-f2b2-488e-bffe-63654f7b8a82 net-A-vm-01

To access the VM, pass either --key-name or the following script as --user-data, which sets the password of the "ubuntu" account to "ubuntu".

    #cloud-config
    password: ubuntu
    chpasswd: { expire: False }
    ssh_pwauth: True

You can access the VM through the web console or through virsh console with some tricks (https://github.com/hyunsun/documentations/wiki/Access-OpenStack-VM-through-virsh-console). If you complete the "Management Network" part, you can also SSH to the VM from the compute node.

Now, test that the VMs can ping each other.

2. Test that VMs in different networks cannot talk to each other

Create another network, for example net-B-private, and create another VM on that network. Now, test that this VM cannot ping the VMs in net-A-private.

3. Test service chaining

Enable ip_forward in your VMs.

$ sudo sysctl net.ipv4.ip_forward=1

Create service dependency with the following REST API.

$ curl -X POST -u onos:rocks -H "Content-Type:application/json" http://[onos_ip]:8181/onos/cordvtn/service-dependency/[net-A-UUID]/[net-B-UUID]/b

Now, ping from a net-A-private VM to the gateway IP address of net-B-private. There will be no reply, but if you run tcpdump in the net-B-private VMs, you can see that one of them receives the packets from the net-A-private VM. To remove the service dependency, send another REST call with DELETE.

$ curl -X DELETE -u onos:rocks -H "Content-Type:application/json" http://[onos_ip]:8181/onos/cordvtn/service-dependency/[net-A-UUID]/[net-B-UUID]
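The two curl calls above differ only in the HTTP method and the optional access-type suffix. A minimal sketch of the same calls with the Python standard library follows. The function names are hypothetical, the network UUIDs in the usage lines are placeholders, and the request is only built, not sent.

```python
import base64
import urllib.request


def dependency_url(onos_ip, tenant_net_id, provider_net_id, access=None):
    """Build the service-dependency URL; access is None, 'u', or 'b'."""
    if access not in (None, "u", "b"):
        raise ValueError("access must be None, 'u', or 'b'")
    url = (f"http://{onos_ip}:8181/onos/cordvtn/service-dependency/"
           f"{tenant_net_id}/{provider_net_id}")
    return url if access is None else f"{url}/{access}"


def dependency_request(method, url, user="onos", password="rocks"):
    """Build (but do not send) a POST or DELETE request with basic auth."""
    if method not in ("POST", "DELETE"):
        raise ValueError("method must be POST or DELETE")
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    return urllib.request.Request(
        url,
        method=method,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
    )


# Placeholder IDs; use the real network UUIDs from "neutron net-list".
create = dependency_request("POST", dependency_url("onos_ip", "net-A-UUID", "net-B-UUID", "b"))
remove = dependency_request("DELETE", dependency_url("onos_ip", "net-A-UUID", "net-B-UUID"))
print(create.get_method(), create.full_url)
print(remove.get_method(), remove.full_url)
```

Passing either request object to `urllib.request.urlopen` would perform the call against a running ONOS instance.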

See the following video for how service chaining works (the service chaining demo starts at 42:00).

With XOS

[TODO]

Management Network

If you need to SSH to a VM directly from a compute node, create a management network and attach it to the VM as a second interface. The management network must contain "management" in its name.

1. Create a management network with a subnet that contains the "localManagementIp" you specified in your network-cfg.json.

    $ neutron net-create management
    $ neutron subnet-create management 172.27.0.0/24

2. Create a VM with the service network and the management network. The management network must be the second interface!

$ nova boot --image f04ed5f1-3784-4f80-aee0-6bf83912c4d0 --flavor 1 --nic net-id=aaaf70a4-f2b2-488e-bffe-63654f7b8a82 --nic net-id=0cd5a64f-99a3-45a3-9a78-7656e9f4873a net-A-vm-01

3. Once the VM is up, enable DHCP on the second interface.

    $ sudo dhclient eth1

Now you can access the VM from the compute node.
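For step 1, a subnet is normally written with its network address, while "localManagementIp" is a host address with a prefix length. The short sketch below derives the subnet CIDR from the configured value with the standard ipaddress module; the function name `management_subnet` is hypothetical.

```python
import ipaddress


def management_subnet(local_management_ip):
    """Return the network CIDR that contains the given interface address."""
    return str(ipaddress.ip_interface(local_management_ip).network)


print(management_subnet("172.27.0.1/24"))  # prints 172.27.0.0/24
```

The printed value is the CIDR to pass to "neutron subnet-create" for the management network.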

REST APIs

Here's the list of REST APIs that CORD-VTN provides.

| Method | Path | Description |
|---|---|---|
| POST | onos/cordvtn/service-dependency/{tenant service network UUID}/{provider service network UUID} | Creates a service dependency with unidirectional access from a tenant service to a provider service. |
| POST | onos/cordvtn/service-dependency/{tenant service network UUID}/{provider service network UUID}/[u/b] | Creates a service dependency with an explicit access type. "u" means unidirectional access from a tenant service to a provider service, and "b" means bidirectional access between the two services. |
| DELETE | onos/cordvtn/service-dependency/{tenant service network UUID}/{provider service network UUID} | Removes the service dependency from a tenant service to a provider service. |